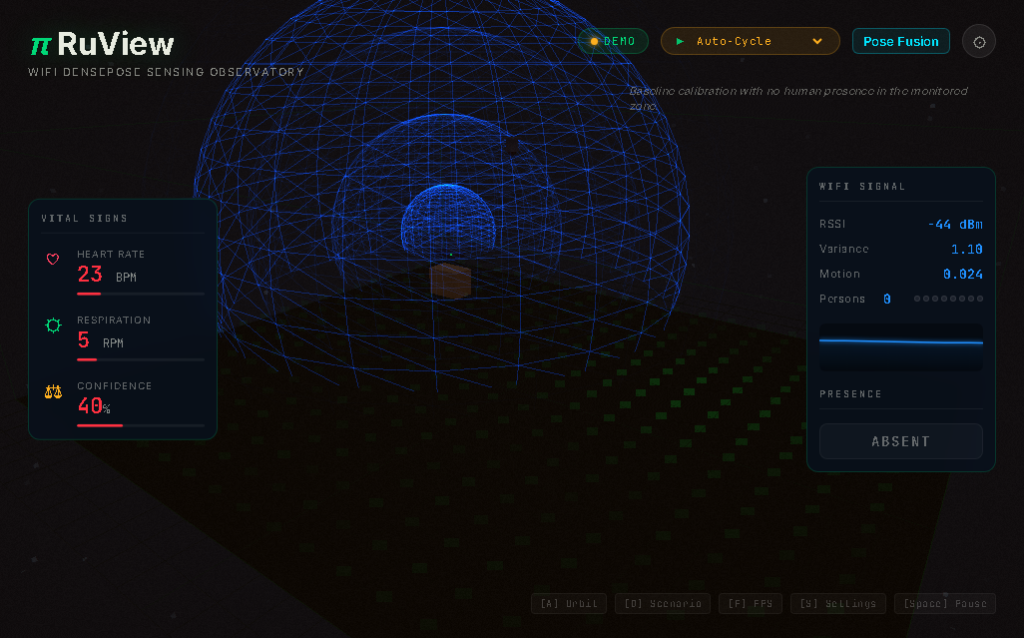

A fascinating new open source project called RuView is turning commodity WiFi signals into real-time human pose estimation, vital sign monitoring, and presence detection, all without a single pixel of video.

The project, which has garnered over 41,000 stars on GitHub, uses Channel State Information (CSI) disturbances caused by human movement to reconstruct body position, breathing rate, heart rate, and presence in real time using physics-based signal processing and machine learning.

How WiFi DensePose Works

WiFi routers flood every room with radio waves. When a person moves, or even breathes, those waves scatter differently. RuView reads that scattering pattern and reconstructs what happened.

An ESP32 mesh of 4-6 nodes captures CSI on channels 1, 6, and 11, coordinated by a time-division multiplexing (TDM) protocol. Multi-band fusion combines the 3 channels × 56 subcarriers each into 168 virtual subcarriers per link. Multistatic fusion then combines multiple links using attention-weighted cross-viewpoint embedding.
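
To make the subcarrier arithmetic concrete, here is a minimal Python sketch of a round-robin TDM schedule and of band fusion by concatenation. The names `tdm_schedule` and `fuse_bands` are hypothetical, and both the slot order and plain concatenation are assumptions for illustration; the project's actual protocol and fusion step may differ.

```python
from itertools import cycle, islice

CHANNELS = (1, 6, 11)          # 2.4 GHz channels named in the article
SUBCARRIERS_PER_CHANNEL = 56   # per-channel CSI width named in the article


def tdm_schedule(node_ids, channels=CHANNELS):
    """Yield (slot, node, channel) so that one node transmits per time slot.

    Assumed ordering: every node takes a turn on a channel, then the whole
    mesh hops to the next channel. This is an illustration, not RuView's
    documented schedule.
    """
    slot = 0
    for channel in cycle(channels):
        for node in node_ids:
            yield slot, node, channel
            slot += 1


def fuse_bands(csi_by_channel, channels=CHANNELS):
    """Concatenate per-channel CSI amplitudes into one virtual vector."""
    fused = []
    for channel in channels:
        row = csi_by_channel[channel]
        if len(row) != SUBCARRIERS_PER_CHANNEL:
            raise ValueError(
                f"channel {channel}: expected {SUBCARRIERS_PER_CHANNEL} "
                f"subcarriers, got {len(row)}"
            )
        fused.extend(row)
    return fused


# Usage: first slots for a 4-node mesh, then one fused frame for one link.
first_slots = list(islice(tdm_schedule(["A", "B", "C", "D"]), 5))
frame = {ch: [float(ch)] * SUBCARRIERS_PER_CHANNEL for ch in CHANNELS}
fused = fuse_bands(frame)  # 3 channels x 56 subcarriers = 168 values
```

Concatenation keeps each channel's subcarriers contiguous, so a downstream model can still tell which band a feature came from.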

After signal processing through Hampel, SpotFi, Fresnel, BVP, and spectrogram analysis, an AI backbone using attention networks, graph algorithms, and smart compression produces 17 body keypoints plus vital signs and a room model.
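
The first stage named above, the Hampel filter, is a standard outlier-rejection technique and is easy to sketch. The version below is a generic textbook form, not RuView's code. It replaces any sample that deviates from its local median by more than a few scaled median absolute deviations (MAD). The function name, window size, and threshold are assumed defaults.

```python
from statistics import median


def hampel_filter(samples, half_window=3, n_sigmas=3.0):
    """Return a copy of samples with outliers replaced by the local median."""
    k = 1.4826  # scales MAD to a standard-deviation estimate for Gaussian data
    cleaned = list(samples)
    for i in range(len(samples)):
        lo = max(0, i - half_window)
        hi = min(len(samples), i + half_window + 1)
        window = samples[lo:hi]
        med = median(window)
        mad = median([abs(x - med) for x in window])
        if abs(samples[i] - med) > n_sigmas * k * mad:
            cleaned[i] = med
    return cleaned


# Usage: a single spike in an otherwise steady CSI amplitude trace.
trace = [1.0, 1.1, 0.9, 1.0, 25.0, 1.05, 0.95, 1.0]
cleaned_trace = hampel_filter(trace)
```

Because it relies on medians rather than means, a single burst of interference does not drag the threshold upward and mask itself.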

No Cameras, No Problem

Unlike traditional computer vision systems, RuView operates entirely from radio signals and self-learned embeddings at the edge. The self-learning system bootstraps from raw WiFi data without requiring labeled training sets or cameras to get started.

This approach offers significant advantages: it works through walls, furniture, and debris; operates in total darkness; avoids GDPR and HIPAA imaging regulations by design; and costs a fraction of traditional camera systems (- per zone using existing WiFi or ESP32 versus -,000 per zone for cameras).

Real-World Applications

The use cases span healthcare, retail, office space management, and emergency response:

- Elderly care and assisted living: Fall detection, nighttime activity monitoring, breathing rate during sleep without wearable compliance

- Hospital patient monitoring: Continuous breathing and heart rate for non-critical beds without wired sensors

- Retail occupancy and flow: Real-time foot traffic, dwell time by zone, queue length without cameras

- Emergency response: Detecting trapped survivors through rubble and classifying injury severity using START triage
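
For readers unfamiliar with START triage, the decision order can be sketched as below. This is a simplified rendering of the standard START steps (walking, breathing, respiratory rate above 30 per minute, perfusion, mental status). It is not RuView's classifier. The `Observation` fields and the `NEEDS_ASSESSMENT` fallback are assumptions, and the article does not say how sensed signals map onto these inputs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """Hypothetical inputs; None means the value could not be observed."""
    can_walk: bool
    breathing: bool
    breaths_per_min: Optional[float] = None
    radial_pulse: Optional[bool] = None
    follows_commands: Optional[bool] = None


def start_triage(obs: Observation) -> str:
    """Classify one person using a simplified START decision order."""
    if obs.can_walk:
        return "MINOR"
    if not obs.breathing:
        # Simplification: field START first repositions the airway and
        # re-checks breathing, which a remote sensor cannot do.
        return "DECEASED"
    if obs.breaths_per_min is not None and obs.breaths_per_min > 30:
        return "IMMEDIATE"
    if obs.radial_pulse is False or obs.follows_commands is False:
        return "IMMEDIATE"
    if obs.radial_pulse is None or obs.follows_commands is None:
        # Assumed fallback: never default to DELAYED on missing data.
        return "NEEDS_ASSESSMENT"
    return "DELAYED"


# Usage: a non-ambulatory survivor breathing 36 times per minute.
category = start_triage(Observation(can_walk=False, breathing=True, breaths_per_min=36))
```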

Technical Specifications

The system achieves impressive performance metrics: pose estimation at 54K frames per second in Rust, breathing detection from 6-30 breaths per minute, heart rate detection from 40-120 beats per minute, and presence sensing with less than 1 millisecond latency.
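
The stated vital-sign ranges correspond to frequency bands: 6-30 breaths per minute is 0.1-0.5 Hz, and 40-120 beats per minute is roughly 0.67-2 Hz. One generic way to estimate a rate within such a band is to pick the frequency with the most spectral power. The sketch below does this with a direct Fourier sum over candidate rates. It is an assumed approach for illustration, not the project's algorithm, and `dominant_rate_per_minute` is a hypothetical name.

```python
import cmath
import math


def dominant_rate_per_minute(signal, sample_rate_hz, min_per_min, max_per_min,
                             step_per_min=0.5):
    """Return the rate (cycles per minute) with the most power in the band."""
    mean = sum(signal) / len(signal)
    centered = [x - mean for x in signal]  # remove the DC offset
    best_rate, best_power = None, -1.0
    steps = int(round((max_per_min - min_per_min) / step_per_min))
    for i in range(steps + 1):
        rate = min_per_min + i * step_per_min
        freq_hz = rate / 60.0
        # Correlate the signal with a complex sinusoid at this frequency.
        acc = sum(
            x * cmath.exp(-2j * math.pi * freq_hz * n / sample_rate_hz)
            for n, x in enumerate(centered)
        )
        power = abs(acc) ** 2
        if power > best_power:
            best_rate, best_power = rate, power
    return best_rate


# Usage: 60 s of synthetic data at 10 Hz, with a strong 15/min breathing
# component and a weaker 72/min heartbeat component.
fs = 10.0
synthetic = [
    math.sin(2 * math.pi * (15 / 60) * n / fs)
    + 0.1 * math.sin(2 * math.pi * (72 / 60) * n / fs)
    for n in range(600)
]
breaths = dominant_rate_per_minute(synthetic, fs, 6, 30)
beats = dominant_rate_per_minute(synthetic, fs, 40, 120)
```

Searching each band separately keeps the much stronger breathing component from being mistaken for the heartbeat.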

The entire pipeline runs on inexpensive hardware. An ESP32 sensor mesh (as low as per node) can achieve full pose estimation, breathing, heartbeat, motion, and presence detection.

Open Source and Community Driven

RuView is built entirely in the open with extensive documentation including 62 Architecture Decision Records explaining each technical choice, 7 Domain-Driven Design models, and comprehensive build guides.

The project runs entirely locally with no internet connection, no cloud account, and no recurring fees. Docker deployment gets live sensing running in 30 seconds with no toolchain required.

For developers interested in the intersection of signal processing, machine learning, and edge computing, RuView represents a compelling demonstration of how AI can perceive the world through signals rather than pixels.