A new optimization technique called IndexCache promises to substantially accelerate inference on long-context AI models, delivering up to 1.82x faster time-to-first-token and 1.48x faster generation throughput. Developed by researchers at Tsinghua University and Z.ai, it addresses one of the most persistent bottlenecks in deploying large language models at scale.

The Quadratic Scaling Problem

Modern language models rely on self-attention mechanisms to understand relationships between tokens in context. However, this computational approach scales quadratically with sequence length, meaning that doubling the context length quadruples the computational requirements. For applications demanding extended context windows, such as document analysis, multi-step agentic workflows, and complex reasoning tasks, this quadratic scaling creates significant practical barriers.
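As a rough illustration of that scaling, the sketch below counts query-key score computations for full self-attention. The function name `attention_pairs` is an assumption made for this example, and the count ignores constant factors such as head count and hidden size.

```python
def attention_pairs(seq_len: int) -> int:
    """Query-key score computations for full self-attention over seq_len tokens."""
    return seq_len * seq_len


# Doubling the context length quadruples the number of score computations.
for n in (50_000, 100_000, 200_000):
    print(f"{n:>7} tokens -> {attention_pairs(n):,} scores")

growth = attention_pairs(200_000) / attention_pairs(100_000)
print(growth)
```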

Sparse attention mechanisms offer a principled solution by having models select and attend only to the most relevant subset of tokens rather than processing every relationship. DeepSeek Sparse Attention (DSA), first introduced in DeepSeek-V3.2, implements this concept through a lightweight “lightning indexer module” at each model layer. These indexers score preceding tokens and select a small subset of them for the main attention mechanism, reducing the cost of that core attention computation from quadratic to linear in sequence length.
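The select-then-attend idea can be sketched in a few lines of plain Python. This is a minimal illustration, not DeepSeek's implementation: the helper names `select_top_k` and `sparse_attention` are hypothetical, and the indexer scores are assumed to arrive as an ordinary list of floats.

```python
import heapq
import math


def select_top_k(index_scores, k):
    """Positions of the k highest-scoring preceding tokens, in position order."""
    k = min(k, len(index_scores))
    top = heapq.nlargest(k, range(len(index_scores)), key=index_scores.__getitem__)
    return sorted(top)


def sparse_attention(query, keys, values, selected):
    """Softmax attention restricted to the selected positions only."""
    scale = 1.0 / math.sqrt(len(query))
    logits = [scale * sum(q * k for q, k in zip(query, keys[i])) for i in selected]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(x - peak) for x in logits]
    total = sum(weights)
    dim = len(values[0])
    return [
        sum(w * values[i][d] for w, i in zip(weights, selected)) / total
        for d in range(dim)
    ]


# Toy data: four preceding tokens, keep the two the indexer scores highest.
scores = [0.1, 0.9, 0.3, 0.8]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
selected = select_top_k(scores, k=2)
output = sparse_attention([1.0, 0.0], keys, values, selected)
```

With a fixed k, the attention step touches k tokens per query no matter how long the context is, which is where the linear cost comes from. Producing the scores still requires looking at every preceding token.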

The Hidden Indexer Bottleneck

Despite DSA’s efficiency gains, researchers identified a critical flaw: the indexer modules themselves operate at quadratic complexity at every single layer. As context lengths grow, the cumulative time spent running these indexers during the initial “prefill” stage skyrockets, partially negating the benefits of optimized attention computation.

The research team discovered a crucial characteristic of how DSA models process data: adjacent transformer layers share between 70% and 100% of their selected important tokens. This cross-layer redundancy presented an opportunity for optimization that the team seized with IndexCache.
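One simple way to quantify this kind of redundancy is the fraction of a layer's selected tokens that the previous layer also selected. The sketch below uses that definition as an assumption; the article does not say exactly how the researchers measured overlap, and the index lists are made-up toy data.

```python
def overlap_ratio(prev_indices, next_indices):
    """Fraction of next_indices that also appear in prev_indices."""
    prev_set = set(prev_indices)
    next_set = set(next_indices)
    if not next_set:
        return 1.0  # nothing selected, so nothing differs
    return len(prev_set & next_set) / len(next_set)


# Toy example: two adjacent layers that agree on four of five selected tokens.
layer_a = [0, 3, 5, 8, 9]
layer_b = [0, 3, 5, 7, 9]
ratio = overlap_ratio(layer_a, layer_b)
print(ratio)
```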

How IndexCache Works

IndexCache partitions a model’s layers into two categories. A small number of “full” (F) layers retain their indexers, actively scoring tokens and selecting important ones for caching. The remaining “shared” (S) layers perform no indexing themselves, instead reusing cached indices from the nearest preceding F layer.

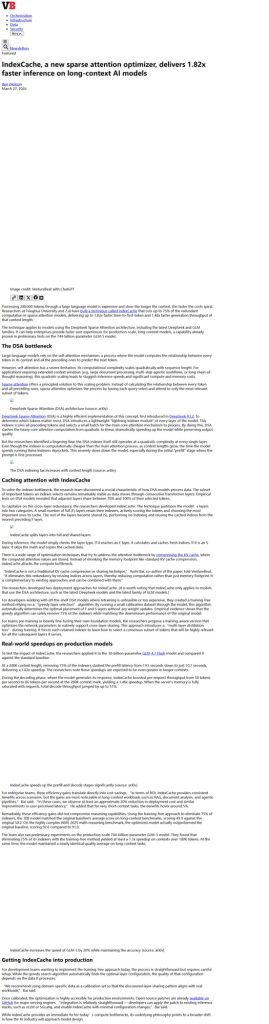

During inference, the model simply checks each layer's type. When it encounters an F layer, it calculates fresh indices. When it reaches an S layer, it skips the indexer computation entirely and reuses the cached indices. This approach eliminates up to 75% of indexer computations while maintaining output quality.
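The F/S dispatch described above can be sketched as a short loop. This is an illustrative sketch under stated assumptions, not the released implementation: the pattern string, the `run_with_index_cache` function, and the `toy_indexer` stand-in are all hypothetical names.

```python
def run_with_index_cache(pattern, compute_indices):
    """Return the token indices used by each layer.

    pattern: string of 'F' (run the indexer) and 'S' (reuse cached indices).
    compute_indices: callable taking a layer index and returning selected tokens.
    """
    if not pattern or pattern[0] != "F":
        raise ValueError("the first layer must be an F layer so a cache exists")
    cached = None
    per_layer = []
    for layer_idx, layer_type in enumerate(pattern):
        if layer_type == "F":
            cached = compute_indices(layer_idx)  # fresh indices, refresh the cache
        elif layer_type != "S":
            raise ValueError(f"unknown layer type: {layer_type!r}")
        per_layer.append(cached)  # S layers reuse the nearest preceding F result
    return per_layer


calls = []


def toy_indexer(layer_idx):
    """Stand-in indexer that records which layers actually ran it."""
    calls.append(layer_idx)
    return [layer_idx, layer_idx + 1]


pattern = "FSSSFSSS"  # one F layer in every four
per_layer = run_with_index_cache(pattern, toy_indexer)
skipped_fraction = 1 - len(calls) / len(pattern)
```

In this toy pattern only layers 0 and 4 run the indexer, so six of the eight indexer calls (75%) are skipped, matching the upper bound quoted above.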

Performance Results

Testing on the 30-billion-parameter GLM-4.7 Flash model revealed substantial improvements. At 200K context length, removing 75% of indexers reduced prefill latency from 19.5 seconds to just 10.7 seconds, representing a 1.82x speedup. During the decoding phase, per-request throughput improved from 58 tokens per second to 86 tokens per second, a 1.48x improvement. Total decode throughput increased by up to 51% under full memory saturation.
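The quoted speedup factors follow directly from the reported figures, as this short check shows. The variable names are illustrative; the numbers are the ones given above.

```python
# Prefill: latency drops, so speedup = old latency / new latency.
prefill_speedup = 19.5 / 10.7

# Decode: throughput rises, so speedup = new throughput / old throughput.
decode_speedup = 86 / 58

print(f"prefill: {prefill_speedup:.2f}x, decode: {decode_speedup:.2f}x")
```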

The efficiency gains extended to the massive 744-billion-parameter GLM-5 model, where eliminating 75% of indexers yielded at least a 1.3x speedup on contexts exceeding 100K tokens. Remarkably, these optimizations did not compromise reasoning capabilities. The optimized model matched baseline performance on long-context benchmarks while outperforming the baseline on the challenging AIME 2025 math reasoning benchmark, scoring 92.6 compared to the original 91.0.

Enterprise Cost Implications

For organizations deploying long-context AI applications, IndexCache translates directly into operational savings. Yushi Bai, co-author of the research paper, estimates that users should expect an approximately 20% reduction in deployment costs and a similar improvement in perceived latency for long-context workloads. For short-context tasks, the benefit is around 5%.

The technique applies specifically to models using DeepSeek Sparse Attention architecture, including the latest DeepSeek and GLM model families. Organizations using these architectures can implement IndexCache through a training-free approach requiring only a calibration dataset to determine optimal layer configuration.

Production Deployment

Open-source implementations are already available on GitHub with patches for major serving engines including vLLM and SGLang. Integration requires minimal configuration changes, making the optimization accessible to teams without specialized infrastructure expertise.

The research team recommends using domain-specific calibration data to ensure discovered layer-sharing patterns align with actual workloads. Once calibrated, the optimization applies automatically during inference without additional user intervention.
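The article does not describe how the calibration step picks which layers keep their indexers. Purely as an assumption for illustration, the sketch below uses a greedy search: it repeatedly converts whichever remaining F layer hurts a calibration loss the least. The function `greedy_pattern`, the `calibration_loss` callback, and the toy per-layer costs are all hypothetical.

```python
def greedy_pattern(num_layers, num_shared, calibration_loss):
    """Greedily mark num_shared layers as 'S', keeping layer 0 as 'F'.

    calibration_loss: callable taking a pattern string such as 'FSFS' and
    returning a number measured on calibration data (lower is better).
    """
    pattern = ["F"] * num_layers
    for _ in range(num_shared):
        best_idx, best_loss = None, None
        for i in range(1, num_layers):  # layer 0 must stay F to seed the cache
            if pattern[i] == "S":
                continue
            trial = pattern.copy()
            trial[i] = "S"
            loss = calibration_loss("".join(trial))
            if best_loss is None or loss < best_loss:
                best_idx, best_loss = i, loss
        if best_idx is None:
            break  # no F layers left to convert
        pattern[best_idx] = "S"
    return "".join(pattern)


# Toy loss: each layer has a made-up cost for losing its own indexer.
toy_costs = [0, 3, 1, 2]


def toy_loss(pattern):
    return sum(cost for cost, kind in zip(toy_costs, pattern) if kind == "S")


chosen = greedy_pattern(num_layers=4, num_shared=2, calibration_loss=toy_loss)
print(chosen)
```

In a real setting the loss callback would run the model on the calibration set, which is why domain-specific data matters: a different workload could rank the layers differently.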

Complementary to Existing Techniques

IndexCache differs from traditional KV cache compression approaches that reduce memory footprint. Instead, it attacks the computation bottleneck directly, reducing redundant mathematical operations rather than shrinking stored attention values. This complementary approach can combine with memory optimization techniques for compound efficiency gains.

Future Architecture Implications

The researchers anticipate that future foundation models will incorporate inference optimization considerations from the initial design stage. Rather than treating throughput and latency as afterthoughts, next-generation architectures will likely balance model scale with deployment efficiency, producing systems optimized for real-world computational constraints.

Conclusion

IndexCache represents a significant step forward in making long-context AI applications practical and economical. By recognizing and eliminating cross-layer redundancy in sparse attention mechanisms, researchers achieved substantial speedups without compromising output quality. For enterprises seeking to deploy sophisticated AI capabilities at scale, this technique offers a pathway to better performance and lower costs using existing hardware and models.