What if an AI agent could learn a new skill on the fly, not by being retrained but by writing its own instruction manual? That’s the core idea behind Memento-Skills, a new framework developed by researchers at multiple universities that enables AI agents to autonomously develop and refine their skills without ever touching the underlying model parameters.

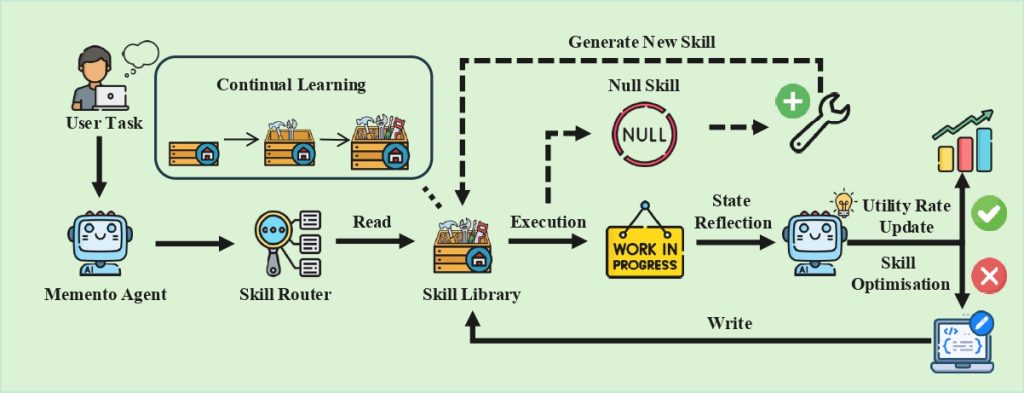

Traditional AI systems are static. You train them, deploy them, and they stay exactly as smart as the day they left the data center. Memento-Skills breaks this paradigm with a mechanism the researchers call Read-Write Reflective Learning: a continuous loop where agents read from their existing skill library, execute tasks, reflect on outcomes, and write improvements back. The model weights never change. The agent just gets better at knowing what to do.

The Architecture of Self-Evolution

At its core, Memento-Skills operates on a deceptively simple insight: instead of updating the model’s parameters (which requires expensive retraining), store experience in an external skill memory. When a task comes in, the agent queries this memory, either retrieving an existing skill or synthesizing a new one from scratch.
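To make the retrieve-or-synthesize idea concrete, here is a minimal sketch. It is not the project's actual API: the `Skill` and `SkillMemory` classes, the keyword-overlap matching, and the placeholder synthesis step are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    keywords: set[str]
    instructions: str


@dataclass
class SkillMemory:
    """Hypothetical external skill store; the model weights are never touched."""

    skills: list[Skill] = field(default_factory=list)

    def retrieve(self, task: str) -> Skill | None:
        """Return the stored skill sharing the most keywords with the task, if any."""
        words = set(task.lower().split())
        best, best_overlap = None, 0
        for skill in self.skills:
            overlap = len(skill.keywords & words)
            if overlap > best_overlap:
                best, best_overlap = skill, overlap
        return best

    def retrieve_or_synthesize(self, task: str) -> Skill:
        """Reuse an existing skill, or create and store a new one."""
        found = self.retrieve(task)
        if found is not None:
            return found
        # Placeholder (assumption): a real agent would ask the frozen model
        # to draft the new skill's instructions.
        new_skill = Skill(
            name=f"skill_{len(self.skills) + 1}",
            keywords=set(task.lower().split()),
            instructions=f"Steps for: {task}",
        )
        self.skills.append(new_skill)
        return new_skill


memory = SkillMemory([Skill("web_search", {"search", "web"}, "Query a search engine.")])
print(memory.retrieve_or_synthesize("search the web for papers").name)  # web_search
print(memory.retrieve_or_synthesize("convert csv to json").name)        # skill_2
print(len(memory.skills))                                               # 2
```

The point of the sketch is that all learning lands in `memory.skills`, a plain data structure outside the model, so the library can grow without any retraining.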

The framework starts with just five seed skills. From there, it grows autonomously. On the GAIA benchmark, Memento-Skills developed 41 distinct skills. On the more demanding Humanity’s Last Exam (HLE) benchmark, it ballooned to 235 skills, all without human intervention in the skill creation process.

Central to this is a custom skill router that intelligently selects which skill to use for a given task. Unlike classic retrieval systems that can fall into a “retrieval trap” (where similar-looking queries yield suboptimal matches), Memento-Skills’ router evaluates task context before pulling from the library. This avoids the pitfall of applying yesterday’s solution to today’s subtly different problem.
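The difference between similarity-only retrieval and context-aware routing can be sketched as follows. This is an illustrative toy, not the router from the paper: the `requires` preconditions, the word-overlap `similarity` function (a stand-in for embedding similarity), and both routing functions are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    requires: frozenset[str]  # hypothetical preconditions, e.g. {"pdf"}


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of lowercase word sets (stand-in for embedding similarity)."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    return len(wa & wb) / len(wa | wb) if wa | wb else 0.0


def naive_route(task: str, skills: list[Skill]) -> Skill:
    """Pick the closest-looking skill and ignore context (the retrieval trap)."""
    return max(skills, key=lambda s: similarity(task, s.description))


def context_route(task: str, context: set[str], skills: list[Skill]) -> Skill | None:
    """Keep only skills whose preconditions hold, then rank by similarity."""
    eligible = [s for s in skills if s.requires <= context]
    if not eligible:
        return None  # nothing fits: a new skill would need to be synthesized
    return max(eligible, key=lambda s: similarity(task, s.description))


skills = [
    Skill("extract_pdf_table", "extract the table from a report", frozenset({"pdf"})),
    Skill("extract_html_table", "extract a table from a web page", frozenset({"html"})),
]
task = "extract the table from this report page"
print(naive_route(task, skills).name)               # extract_pdf_table
print(context_route(task, {"html"}, skills).name)   # extract_html_table
```

Here the task wording looks closest to the PDF skill, but the input is actually an HTML page. Checking context first keeps the router from applying a similar-looking but unsuitable skill.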

Performance That Demands Attention

The numbers are striking. On the GAIA benchmark, a general AI assistant evaluation covering real-world tasks, Memento-Skills achieved 66% accuracy, compared to just 52.3% for a static baseline with the same underlying model. That’s a 13.7-point gain with no fine-tuning of the model at all.

The gains are even more dramatic on Humanity’s Last Exam, a notoriously difficult benchmark designed to test AI reasoning at the limits. Memento-Skills more than doubled the baseline performance: from 17.9% to 38.7%. In AI research, doubling is rare. Doubling without retraining is nearly unheard of.
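For readers who want to check the arithmetic behind the GAIA and HLE figures quoted in this section, the short snippet below computes the absolute gain in percentage points and the improved-to-baseline ratio. The `gains` helper is a hypothetical name introduced here; the four input numbers are the ones reported in this article.

```python
def gains(baseline: float, improved: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, improved / baseline ratio)."""
    return round(improved - baseline, 1), round(improved / baseline, 2)


print(gains(52.3, 66.0))  # GAIA: (13.7, 1.26)
print(gains(17.9, 38.7))  # HLE:  (20.8, 2.16)
```

The HLE ratio of about 2.16 is what justifies the phrase "more than doubled", while the GAIA result is a relative improvement of roughly 26%.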

How Read-Write Reflective Learning Works

The “read-write” cycle deserves closer examination. When an agent receives a task:

- Read: The skill router queries the external skill memory for relevant prior skills.

- Execute: The agent applies the selected or newly generated skill to solve the task.

- Reflect: The system evaluates the outcome. Did it work? How well?

- Write: Based on reflection, the agent updates the skill library, boosting the utility score of successful skills, or reorganizing and optimizing skills that underperformed.

This loop runs continuously. Every interaction is a learning opportunity. The agent isn’t just solving problems; it’s cataloging how it solved them for future reference.
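The four steps can be sketched as a single function. This is a simplified illustration under stated assumptions, not the framework's implementation: the `Skill` class, the `reflective_step` function, the exact-match success check, and the rule that nudges a utility score toward 1 on success and toward 0 on failure are all hypothetical. In particular, the sketch only lowers the score of a failing skill, whereas the article describes such skills being reorganized and optimized.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Skill:
    name: str
    run: Callable[[str], str]
    utility: float = 0.5  # hypothetical score in [0, 1]


def reflective_step(library: list[Skill], task: str, expected: str, rate: float = 0.2) -> bool:
    # Read: choose the skill with the highest utility score.
    skill = max(library, key=lambda s: s.utility)
    # Execute: apply the selected skill to the task.
    result = skill.run(task)
    # Reflect: did the outcome match what was expected?
    success = result == expected
    # Write: move the utility toward 1 on success and toward 0 on failure.
    target = 1.0 if success else 0.0
    skill.utility += rate * (target - skill.utility)
    return success


library = [
    Skill("uppercase", str.upper, utility=0.6),
    Skill("reverse", lambda text: text[::-1], utility=0.5),
]
outcomes = [reflective_step(library, "abc", "cba") for _ in range(3)]
print(outcomes)  # [False, True, True]
print([(s.name, round(s.utility, 2)) for s in library])
# [('uppercase', 0.48), ('reverse', 0.68)]
```

After one failure the initially preferred skill drops below its competitor, and the loop switches to the skill that actually solves the task. Nothing about the model changes; only the scores in the library do.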

What This Means for AI Development

Memento-Skills represents a shift in how we think about AI capability expansion. Traditionally, making an AI smarter meant collecting more data, burning more GPU hours, and retraining. Memento-Skills suggests a different path: deployment-time learning that keeps the model frozen but the agent adaptive.

This has massive practical implications. Organizations could deploy a single base model and let it specialize in real time for their specific use cases: no retraining pipelines, no GPU clusters, no versioned model releases. The agent learns from live interactions and stores that knowledge externally.

Open Source and Ready to Explore

The Memento-Skills project is fully open source, available on GitHub at Memento-Teams/Memento-Skills. The repository includes the full framework, documentation, learning results, and installation scripts for both GUI and developer-oriented setups.

There’s also a live demo at skills.memento.run where you can see the framework in action, and a Discord community for those looking to contribute or discuss the project.

Looking Ahead

The researchers behind Memento-Skills are clear that this is not a finished product; it’s a new paradigm. The v0.2.0 release brought major architectural improvements including a Bounded Context redesign and execution phase refactoring, signaling that the framework is actively evolving.

But the core thesis is now validated: AI agents can rewrite their own capabilities without retraining. The question is no longer whether deployment-time learning works, but how far it can go. With Memento-Skills, we may be watching the first credible answer to that question.

Explore the project on GitHub and learn more at memento.run.